Origin Lab Raises $8M Seed to Turn Video Games Into AI Infrastructure

AI labs spent the last three years devouring the internet like raccoons behind a casino buffet. Tweets. Reddit threads. YouTube transcripts. Pirated books. Entire corners of human civilization scraped into giant statistical soup. That strategy worked long enough to produce language models capable of writing wedding vows, debugging Python, and hallucinating legal cases with the confidence of a mediocre middle manager. Now the industry wants something harder: reasoning, spatial awareness, environmental understanding, and cause and effect.

That shift explains why San Francisco-based Origin Lab just raised $8M in Seed funding led by Lightspeed Venture Partners, with participation from SV Angel, Eniac Ventures, FPV Ventures, Seven Stars, and angel investors including Twitch co-founder Kevin Lin and Cruise founder Kyle Vogt. Origin Lab is building a platform that converts licensed video game worlds into structured multimodal training data for AI systems. Not scraped gameplay clips or random Twitch footage stripped of context, but licensed environments paired with metadata around movement, camera positioning, player inputs, environmental state, and interaction dynamics. The broader implication is becoming difficult to ignore: AI’s next phase may depend less on bigger models and more on better worlds.

What Happened

Origin Lab announced an $8M Seed round to expand its platform for creating structured AI training datasets derived from licensed gaming environments. The company was founded by Anne-Margot Rodde, Colin Carrier, and Antoine Gargot, with leadership backgrounds tied to gaming, AI, and interactive systems. The startup says it has already secured partnerships with more than 20 game publishers spanning 50+ titles, transforming those environments into multimodal datasets designed for frontier AI labs building world models, embodied AI systems, robotics platforms, and simulation-driven intelligence architectures.

The focus on world models matters because large language models became commercially viable through internet-scale text data, while world models require environments where actions produce consistent outcomes, physics remains stable, and interaction chains can be measured with precision. Video games accidentally became one of the richest simulation layers ever built. For decades, the gaming industry invested billions of dollars in creating digital worlds capable of modeling movement, economies, spatial navigation, resource systems, behavioral patterns, conflict resolution, and collaborative interaction. Most of that infrastructure was designed for entertainment. AI labs now view it as training terrain, and Origin Lab has positioned itself directly inside that transition.

Why Origin Lab Matters

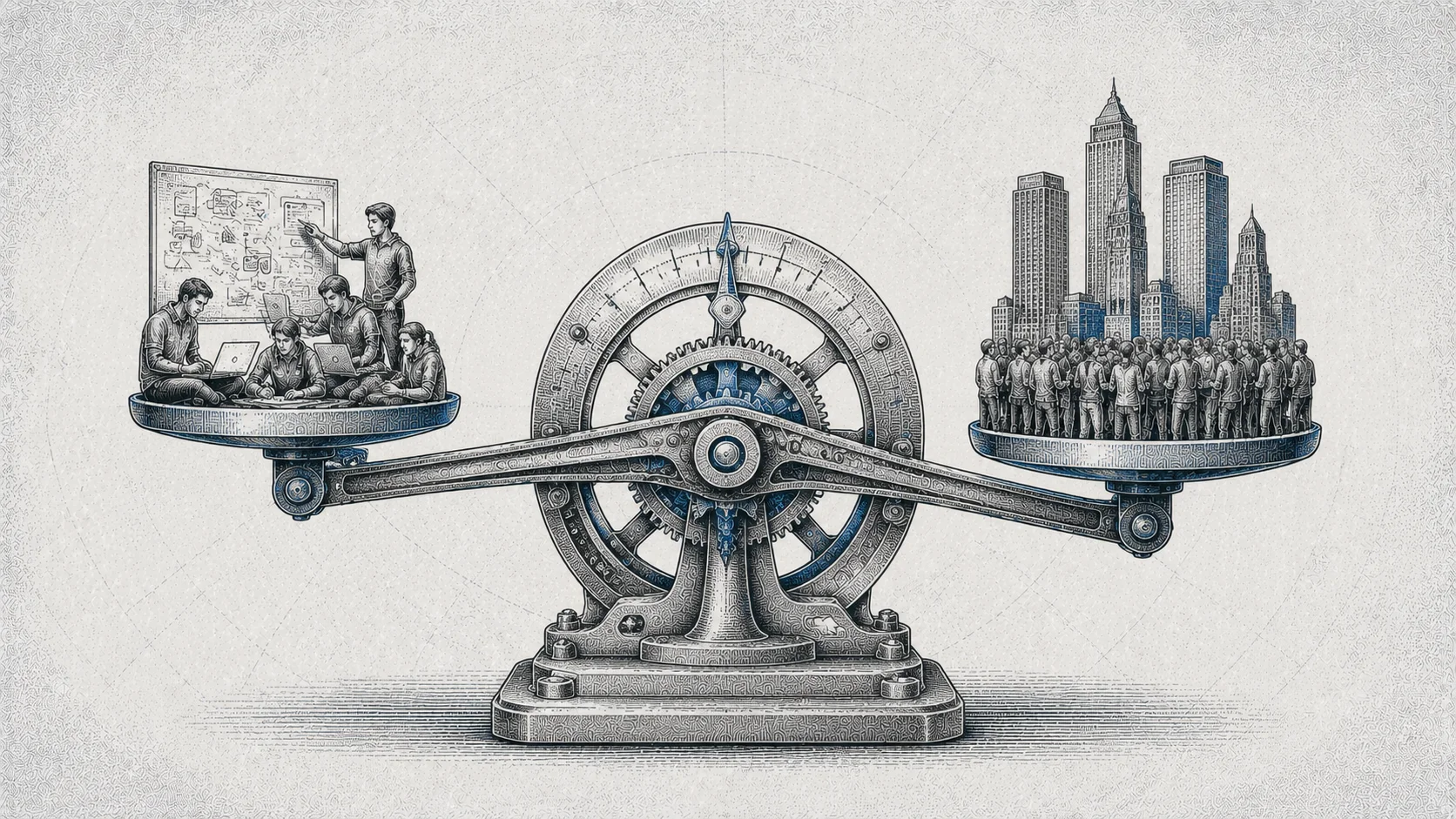

The AI market is quietly entering a new resource war. The first era was defined by compute, as Nvidia became the gravitational center of modern technology once GPUs turned into the industrial backbone of machine learning. The second era was defined by the foundation models themselves, with OpenAI, Anthropic, Google DeepMind, and Mistral competing on scale, inference quality, and distribution. The next era increasingly revolves around proprietary data ecosystems, which changes the economics dramatically by shifting leverage toward whoever controls scarce, well-licensed data.

Public internet data is becoming saturated, legally contested, and operationally noisy. Training frontier AI systems on uncontrolled scraped content creates quality issues, licensing risk, and inconsistent environmental logic. Models trained exclusively on internet-scale text often understand language better than they understand reality. Origin Lab’s pitch is deceptively simple: licensed digital environments create cleaner intelligence signals. Inside a structured game world, movement obeys rules, interactions produce measurable consequences, spatial relationships remain coherent, and environmental variables can be tracked frame by frame. That consistency becomes extremely valuable for AI systems attempting to learn navigation, planning, simulation, robotics reasoning, or embodied interaction.

Market Context

The rise of world models has become one of the most important conversations inside frontier AI research. Companies like World Labs, Physical Intelligence, Figure AI, and other robotics-focused startups are pushing beyond pure text generation toward systems capable of understanding physical and simulated environments. Autonomous agents, robotics platforms, simulation engines, and multimodal reasoning systems all require richer environmental training signals than traditional internet scraping can provide. That creates demand for structured multimodal data.

Origin Lab is not competing directly with OpenAI or Anthropic. It is operating one layer beneath them in the infrastructure stack, and that position may ultimately prove more durable than people realize. Infrastructure companies often become the quiet winners of platform shifts because they supply the picks and shovels instead of fighting for consumer attention. Scale AI demonstrated this dynamic during the supervised learning boom. Nvidia demonstrated it during the GPU acceleration era. Origin Lab appears to be betting that world-model development creates an entirely new infrastructure category around licensed synthetic environments and structured simulation data.

Competitive Landscape

Origin Lab occupies an unusual intersection between gaming infrastructure, AI data pipelines, and simulation tooling. That positioning creates strategic advantages because gaming publishers own massive libraries of underutilized interactive environments, while AI labs have a growing appetite for structured world data. Origin Lab acts as the connective tissue between those ecosystems while emphasizing rights-cleared licensing instead of scraped content acquisition. That distinction may become increasingly important as regulatory scrutiny around AI training data intensifies globally.

The company also benefits from timing within the gaming industry itself. Game studios continue searching for new monetization channels beyond traditional publishing economics, and licensing virtual environments for AI training introduces a new revenue layer tied to assets publishers already own. In practical terms, Origin Lab is turning digital worlds into data infrastructure. That sentence would have sounded ridiculous five years ago. Today it sounds financially inevitable.

What This Signals

Origin Lab’s funding round signals a broader shift happening across AI infrastructure markets: high-quality proprietary data is becoming strategically scarce. The assumption that “more internet equals better AI” is starting to crack under pressure because frontier AI systems increasingly require controlled environments capable of teaching interaction dynamics, environmental reasoning, spatial navigation, and consequence modeling. That pushes value toward companies controlling unique datasets with licensing clarity and structural consistency. Gaming environments happen to satisfy those requirements unusually well.

This also reflects a convergence between industries that historically operated independently. Gaming, robotics, enterprise AI, autonomous systems, simulation infrastructure, and multimodal research are beginning to overlap operationally, financially, and strategically. That convergence creates entirely new market categories, and Origin Lab recognized the opening early enough to matter.

Frequently Asked Questions

What is Origin Lab?

Origin Lab is a San Francisco-based AI infrastructure company that converts licensed video game worlds into structured multimodal training data for world models and embodied AI systems.

How much funding did Origin Lab raise?

Origin Lab raised $8M in Seed funding led by Lightspeed Venture Partners.

Who invested in Origin Lab?

Investors include Lightspeed Venture Partners, SV Angel, Eniac Ventures, FPV Ventures, Seven Stars, Kevin Lin, and Kyle Vogt.

What does Origin Lab sell?

Origin Lab provides licensed, structured AI training datasets derived from video game environments, including gameplay data, spatial interactions, camera movement, and environmental metadata.

Why are video games valuable for AI training?

Video games provide controlled environments with stable physics, measurable interactions, spatial consistency, and structured behavioral data that help train world models and embodied AI systems.

Why does this funding round matter?

The funding reflects growing demand for proprietary multimodal datasets as AI labs shift toward systems that understand environments, simulation, and real-world interaction rather than only text generation.