Voice AI Infrastructure Hits a Decision Point as Vapi Hosts April 28 Architecture Session

About This Event

Voice AI has moved past the demo phase and into operational reality, where performance is measured under pressure, not applause. Systems that once handled controlled interactions are now expected to operate across thousands of concurrent conversations, where latency, cost, and reliability expose every architectural shortcut. What looked efficient in early builds starts to strain at scale. The core question facing teams is no longer what their voice product can do, but whether the foundation it runs on can hold. This is where SaaS shifts from interface to infrastructure, and every underlying decision starts to carry weight.

April 28 lands right in that pressure pocket. “Integrated or Open Stack? How to Architect Voice AI That Scales,” presented by Vapi and hosted under Vapi Events, is not a vibe session. It is a decision room: a one-hour virtual session on Luma, running 10:00–11:00 AM PT, where the conversation moves from what voice AI can do to what it can sustain. Vapi and Cartesia are not showing off tricks. They are interrogating the plumbing. Fully packaged versus open and modular is not a philosophical debate here. It is a line item that shows up in margin, uptime, and the ability to pivot as models evolve. For anyone building inside SaaS, this is the layer where product velocity meets architectural debt.

Picture the room, even if it is digital. Builders who have already shipped something that talks. Product leaders who promised conversational interfaces and now have to keep them coherent under load. Engineering leads who understand that every millisecond compounds and every dependency becomes a negotiation. The audience spans AI engineers, product leaders, enterprise architects, plus CX and operations executives evaluating voice for real workflows. This is not a tourist crowd. It is people who have felt systems break and want fewer surprises the next time.

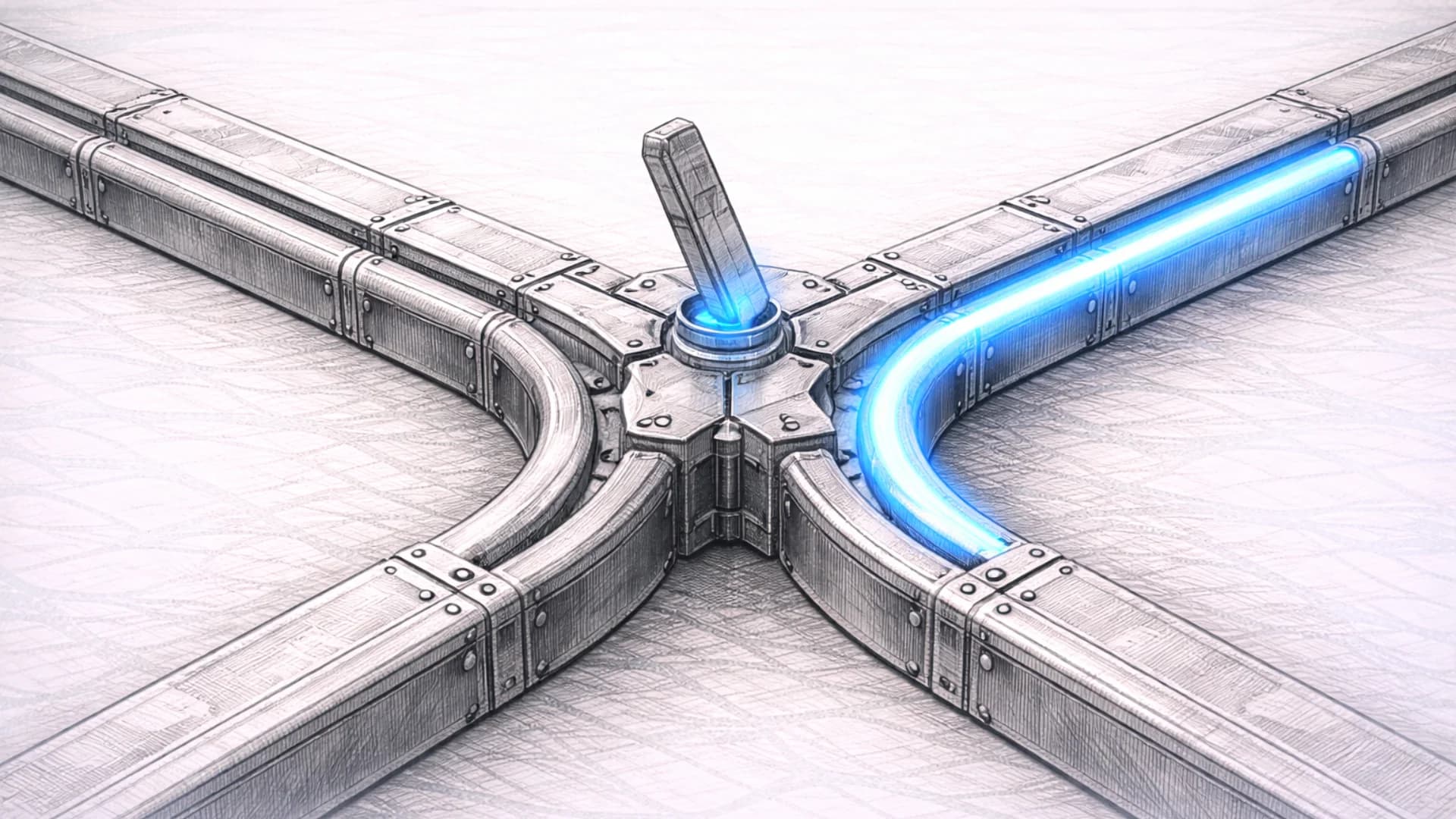

Nick Robin brings Vapi’s vantage point from the infrastructure layer, where patterns repeat whether teams acknowledge them or not. Israel Shalom represents Cartesia from the model layer, where performance is conditional and the “best” choice shifts with use case, audience, and acoustic environment. Together they map tradeoffs across the three layers that define every voice interaction: speech recognition, language understanding, and speech synthesis. Each layer moves on its own timeline. Each introduces its own failure modes. And each decision compounds across the stack.

What they are really offering is a framework. Fully packaged systems reduce integration overhead and simplify vendor relationships. Open, modular stacks introduce complexity but allow teams to swap components as the model landscape changes. The session goes further, outlining practical questions to ask any provider before switching costs lock in, and showing how production teams structure systems so components remain independently upgradeable. In a market where SaaS vendors increasingly blur into infrastructure providers, those questions carry weight far beyond engineering.
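To make "independently upgradeable" concrete, here is a minimal Python sketch of a modular voice stack. It is not Vapi's or Cartesia's API: every class and method name below (`VoicePipeline`, `SpeechRecognizer`, `transcribe`, and so on) is a hypothetical stand-in, and the toy components only pass text through. The point is the shape: each of the three layers sits behind its own narrow interface, so one layer can be replaced without touching the other two.

```python
from dataclasses import dataclass, replace
from typing import Protocol


# Hypothetical interfaces, one per layer of the voice stack.
class SpeechRecognizer(Protocol):
    def transcribe(self, audio: bytes) -> str: ...


class LanguageModel(Protocol):
    def respond(self, text: str) -> str: ...


class SpeechSynthesizer(Protocol):
    def synthesize(self, text: str) -> bytes: ...


@dataclass(frozen=True)
class VoicePipeline:
    """Composes the three layers; depends only on the interfaces above."""
    recognizer: SpeechRecognizer
    language_model: LanguageModel
    synthesizer: SpeechSynthesizer

    def handle_turn(self, audio: bytes) -> bytes:
        text = self.recognizer.transcribe(audio)
        reply = self.language_model.respond(text)
        return self.synthesizer.synthesize(reply)


# Toy stand-ins (assumptions for illustration, not real providers).
class EchoRecognizer:
    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8")


class UppercaseModel:
    def respond(self, text: str) -> str:
        return text.upper()


class PlainSynthesizer:
    def synthesize(self, text: str) -> bytes:
        return text.encode("utf-8")


class TaggedSynthesizer:
    """A pretend 'newer' synthesis provider."""
    def synthesize(self, text: str) -> bytes:
        return f"[v2] {text}".encode("utf-8")


pipeline = VoicePipeline(EchoRecognizer(), UppercaseModel(), PlainSynthesizer())

# Swap only the synthesis layer; recognition and language stay untouched.
upgraded = replace(pipeline, synthesizer=TaggedSynthesizer())
```

In a fully packaged system, the equivalent of `handle_turn` lives inside the vendor, which is what removes the integration work and also what removes the seam where a swap like `replace(...)` would happen.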

The shift here is cultural as much as technical. Voice is no longer a novelty channel. It is becoming a primary enterprise interface because it compresses friction across both internal operations and customer experience. That compression puts stress on everything underneath. Separation of concerns stops being theoretical. It becomes operational leverage. Teams that locked their stack early are already feeling the constraint. The ones designing for adaptability are buying optionality in a market that keeps moving.

A year ago, the conversation was about what voice agents could say. Now it is about how systems behave under pressure, how they fail, and how quickly they recover. This session meets that moment directly. It gives founders language to explain their architecture as strategy. It gives operators a way to evaluate vendors before dependency becomes risk. It gives decision-makers inside SaaS organizations a clearer view of where control sits in the stack and where it quietly slips away.

There is a pattern in every infrastructure cycle. Early momentum rewards speed. Later stages reward discipline. Voice AI is crossing that line now. April 28 is not about choosing a side. It is about understanding the cost of the choice before it compounds, and recognizing that in this layer of the stack, flexibility is not a feature. It is the only way to stay in the game.