The Struggle to Regulate AI: Washington’s Fight Over Federal Preemption, State Laws, and AI Power

About This Event

Washington didn’t host a conversation on AI that day; it exposed a fault line. The May 1 WP Intelligence briefing carried the energy of a system mid-transition, where AI stopped behaving like a policy topic and started operating like a live power contest. The contest is not theoretical or future-facing. It is unfolding in real time between the White House, Congress, and a growing bloc of states that are done waiting for federal clarity. What emerged over the course of the conversation was not a clean storyline, but a layered collision of incentives where speed, control, and risk are all being renegotiated at once, and no one serious is pretending otherwise.

The stage itself told you everything about the moment. Luiza Savage, editorial director of WP Intelligence, opened and anchored the discussion with a clear line of sight into both policy and market impact. Benjamin Guggenheim, author of the AI & Tech Brief newsletter, brought the pulse of Washington’s day-to-day negotiations into focus. Dean Ball, senior fellow at the Foundation for American Innovation and former senior policy adviser for artificial intelligence at the White House Office of Science and Technology Policy, connected those negotiations to the machinery behind them. Rebecca Adams, WP Intelligence lead health care analyst, extended the conversation into one of the most regulated and consequential sectors in the economy. The sequence mattered because it mirrored the flow of power, from federal intent to legislative friction to real-world implementation.

The White House position, as outlined early, is disciplined in tone but ambitious in scope. It calls for a national, light-touch framework designed to prevent fragmentation before it hardens into something unmanageable. It reads like efficiency, but it operates like a preemptive strike against regulatory sprawl. The logic is straightforward: if fifty states write fifty versions of AI law, the market slows down, and the smallest players feel it first. So the administration is attempting to draw the perimeter early, pairing an innovation-friendly posture with targeted protections around deepfakes, children’s exposure, intellectual property, and the infrastructure required to support AI at scale. It is a controlled message built for momentum.

That control begins to slip the moment reality enters the chat. The introduction of models capable of identifying vulnerabilities across critical systems did not just raise concerns; it changed the jurisdiction of the conversation. AI stopped being something you regulate within tech and became something that touches finance, energy, and national security simultaneously. Once that shift happens, the room expands. New actors step in, not because they want to, but because they have to. And with them comes a different posture: less tolerance for ambiguity, more focus on containment, coordination, and consequence.

What follows is not a clean pivot but a visible recalibration. The idea of unrestricted deployment starts to give way to staged access. Distribution becomes deliberate. Access becomes conditional. You can avoid calling it control, but functionally, that is where the system begins to move. The implication is not abstract. It defines who gets to build, who gets to test, and who gets to deploy at scale before the rest of the market catches up. That is not just policy. That is leverage.

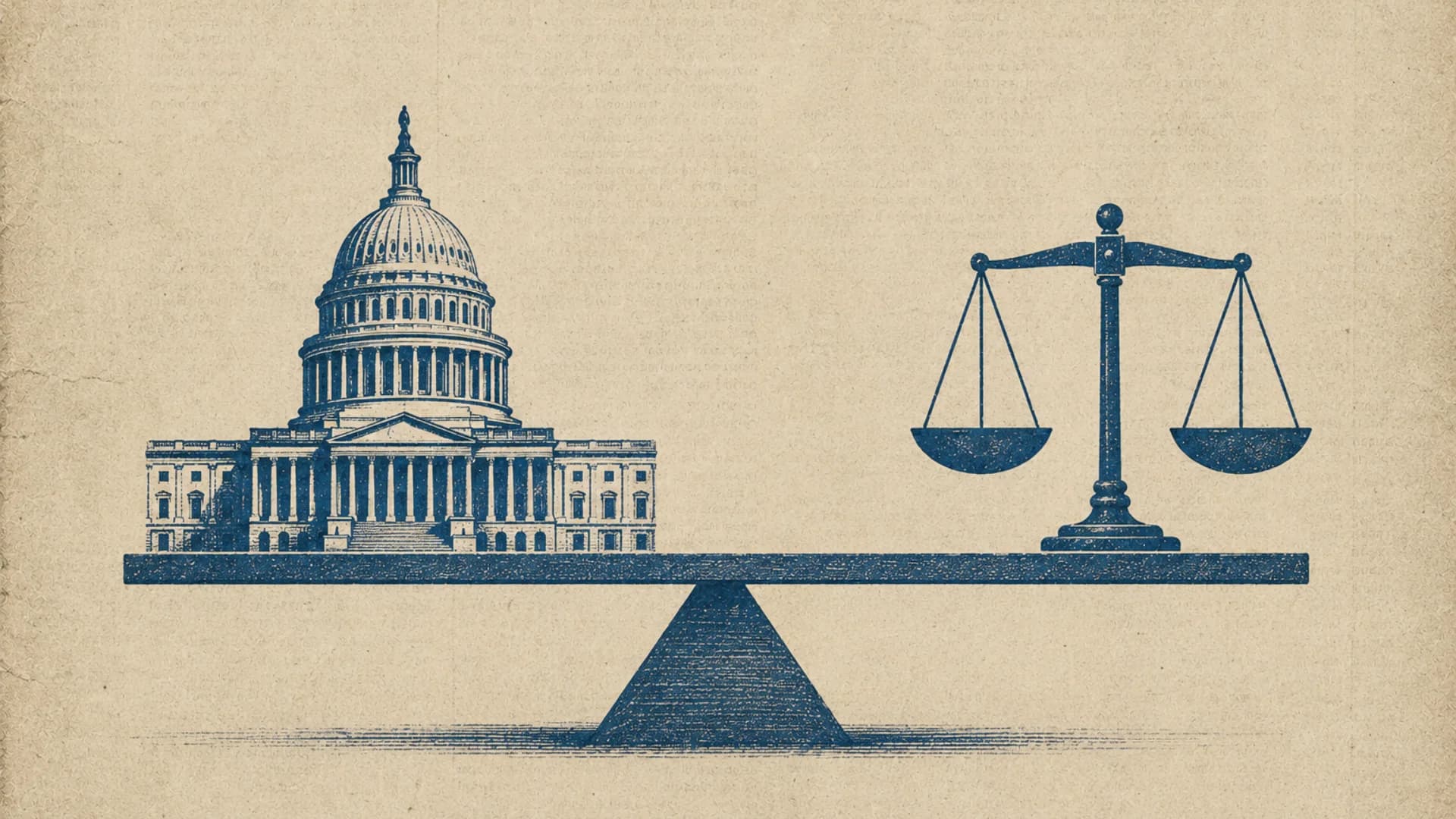

Congress, as expected, does not simplify the picture. It complicates it in ways that reflect the broader market. The divisions are not cleanly partisan. They run through both sides, shaped by competing pressures that refuse to resolve neatly. Safety, innovation, national security, and global competitiveness all demand attention, but they do not align on timeline or priority. The result is a legislative environment defined less by resolution and more by negotiation. Progress is possible, but it will not be linear, and it will not be fast. Any meaningful movement is likely to ride alongside larger legislative vehicles, where urgency meets political reality.

While Washington debates structure, the states are already shaping behavior. Nowhere is that more visible than in health care, where authority is localized and consequences are immediate. States are not trying to define AI in its entirety. They are carving out the parts they can control and acting with precision. Human oversight in clinical decisions, limits on AI-driven patient interaction, and reinforcement of professional boundaries are not symbolic moves. They are operational constraints that directly affect how AI is deployed on the ground. At the same time, federal agencies are encouraging adoption through funding and policy support, creating a dual-track environment where acceleration and restriction coexist without reconciliation.

That duality is the current operating system. AI is being pushed forward and held back at the same time, depending on where you stand. For companies navigating this, the takeaway is not to wait for clarity, because clarity is not arriving in a single moment. It is being formed through use. The strongest signal from the conversation was not caution, but action. Deploy where you can, learn quickly, and engage legal frameworks alongside product development. The organizations that move now are not just adapting to regulation. They are informing how it is interpreted.

What made the session land was not just the content, but the composition. This was not a theoretical panel. It was a convergence of people who influence, interpret, and implement policy across different layers of the system. That mix created a working dynamic where insights built on each other instead of sitting in isolation. The value extended beyond the hour itself, carrying into the follow-on conversations where alignment begins to form and strategy takes shape.

Nothing about the discussion suggested finality. If anything, it reinforced how early this still is. AI governance in the United States is not being delivered as a finished framework. It is emerging through overlapping decisions, shaped by political incentives, technological capability, and real-world deployment. The people paying attention are not waiting for a definitive answer. They are tracking how influence is distributed, how priorities are shifting, and where the next inflection point will land.