Nexthop AI Raises $500M in Series B Funding to Expand AI Networking Infrastructure

Funding Details: $500M, Series B

AI runs on compute, but compute moves on networks. And right now those networks are under more pressure than a data center power bill in August. That tension is exactly where Nexthop AI decided to build. Not chasing headlines. Not selling hype. Just engineering the connective tissue that lets massive AI clusters actually function at hyperscale.

Santa Clara-based Nexthop AI just secured an oversubscribed $500M Series B that values the company at $4.2B. Lightspeed Venture Partners led the round, with Andreessen Horowitz, Altimeter, Kleiner Perkins, WestBridge Capital, Battery Ventures, and Emergent Ventures participating. That pushes total funding to $610M since Nexthop AI emerged from stealth in 2025 with a $110M launch round.

Behind the build is Founder and CEO Anshul Sadana, the former COO of Arista Networks, who understands the guts of cloud networking the way a master mechanic understands an engine block. When Sadana decided the next chapter of AI infrastructure needed a faster lane, investors did not hesitate to ride shotgun.

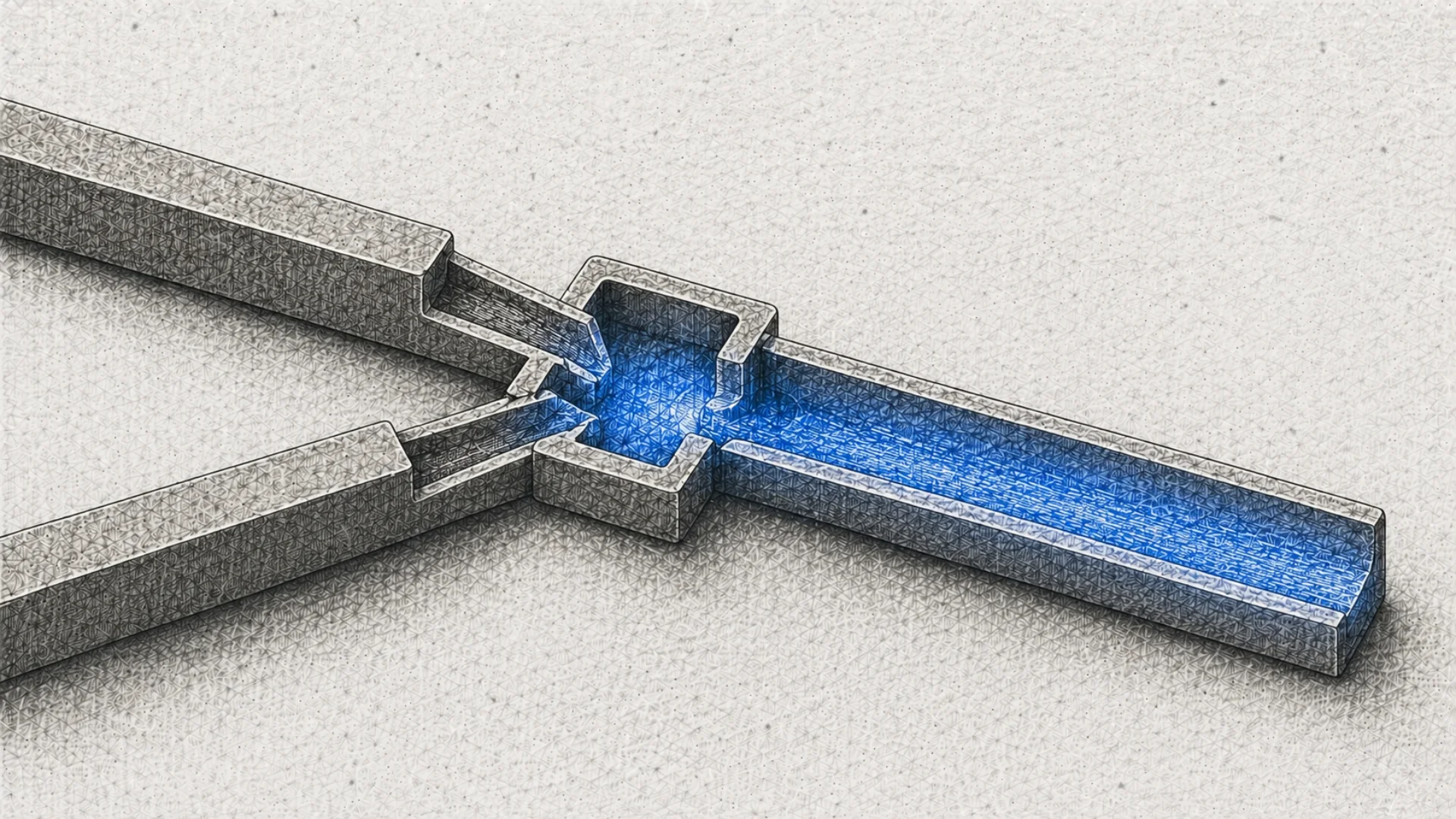

Here is the part most people miss about the AI boom. The spotlight sits on GPUs, models, and trillion-parameter bragging rights. Meanwhile, thousands of machines are trying to talk to each other at once across massive clusters, moving oceans of data every second. If the network cannot keep up, the whole system crawls. Nexthop AI is focused precisely on that pressure point.

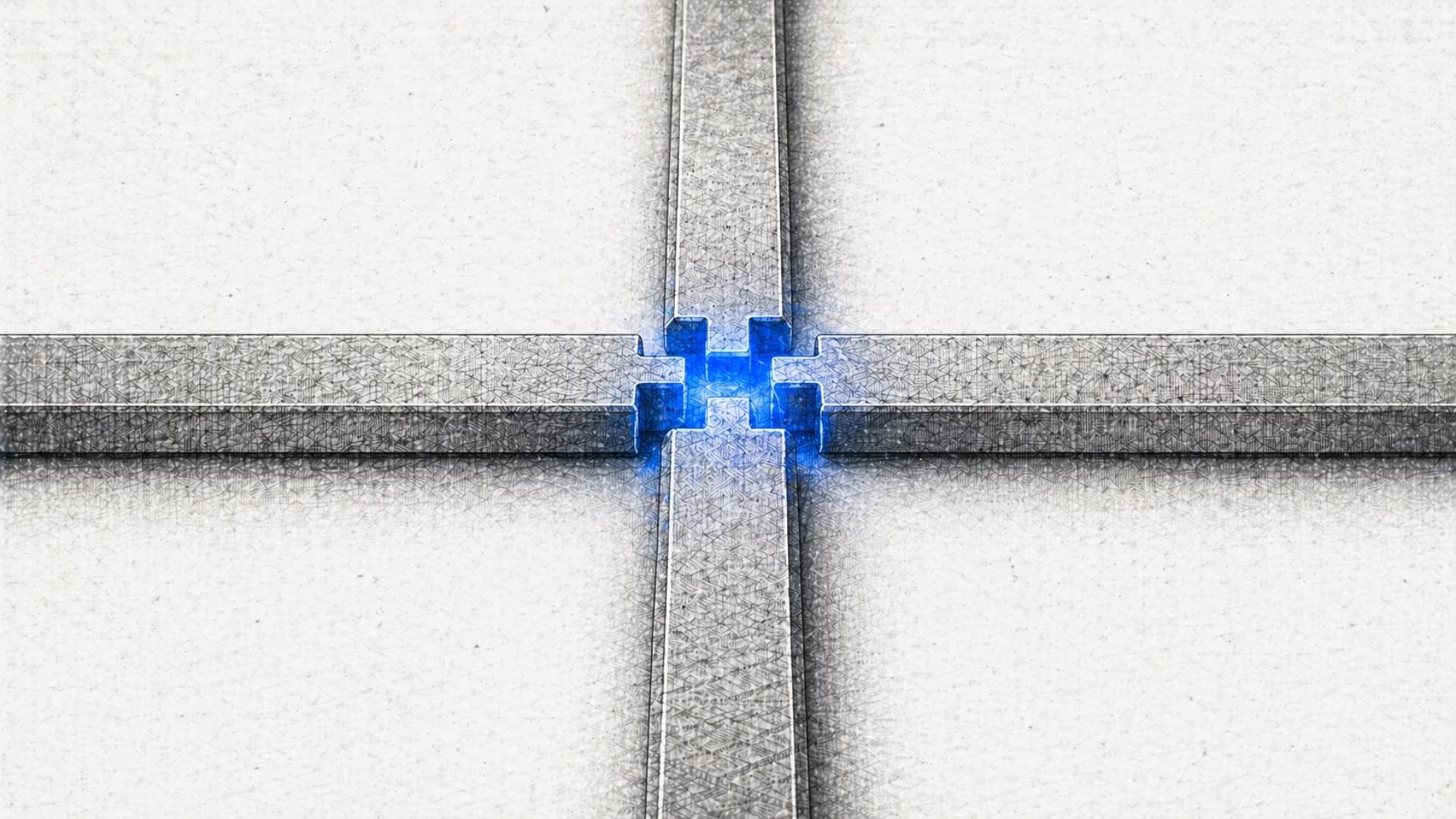

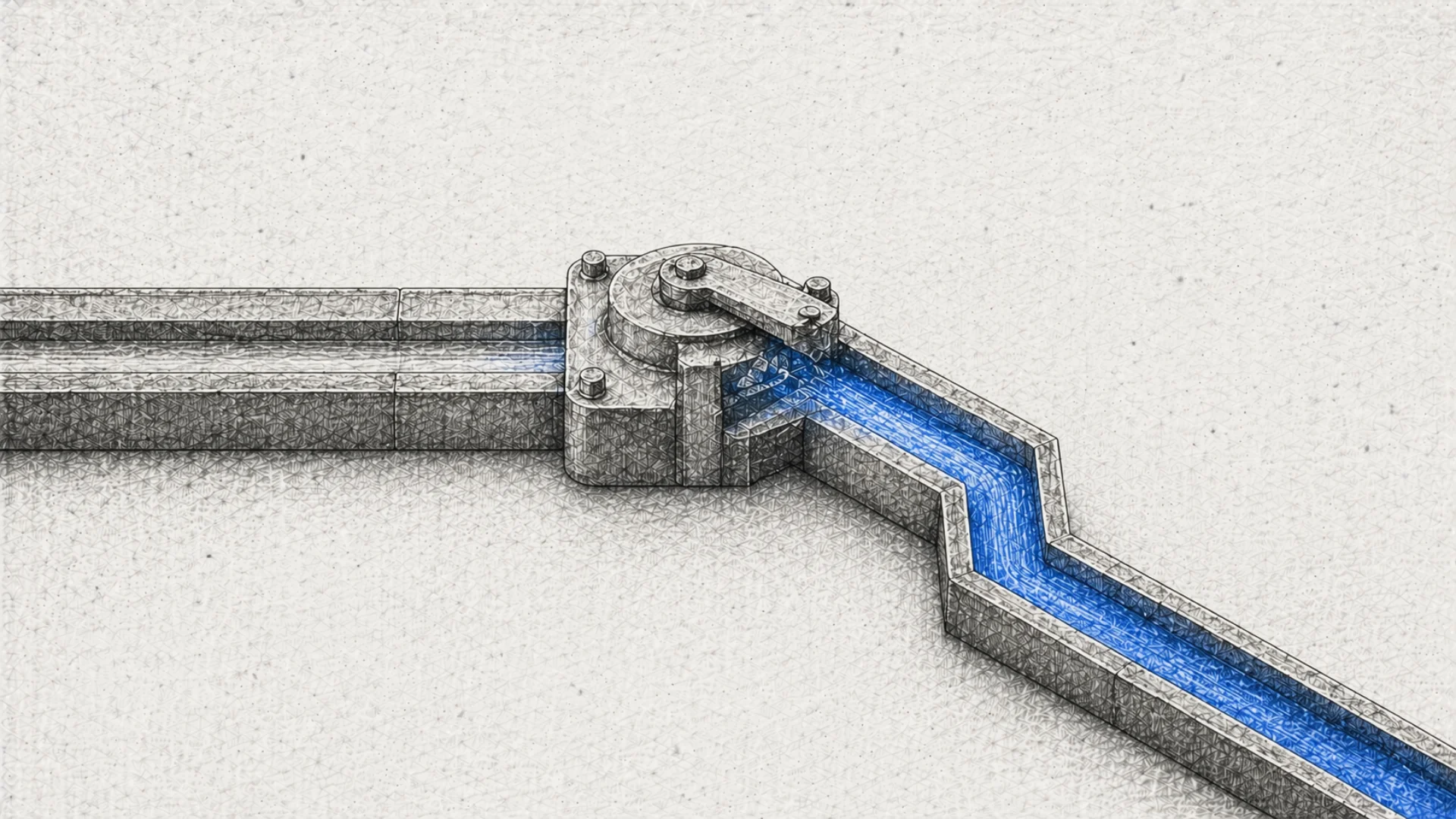

The company builds switching platforms and networking systems designed specifically for hyperscale cloud operators and the rising class of NeoCloud providers. Some deployments are turnkey. Others are deeply customized. Both paths revolve around one goal: delivering performance, power efficiency, and deployment speed for AI data centers that are expanding faster than most infrastructure was ever designed to handle.

Under the hood, Nexthop AI integrates open-source network operating systems like SONiC and FBOSS with merchant switching hardware engineered for hyperscale environments. The result is networking infrastructure built for scale-out clusters, scale-across architectures, and the front-end traffic that keeps AI workloads moving without friction.

From Santa Clara to Seattle, Vancouver, and Bengaluru, the team is building the pipes that let AI flow at industrial scale. It may not grab headlines the way a new model release does, but anyone who has spent time inside cloud infrastructure knows the truth. When the network moves faster, everything moves faster.